How I built this Blog, the idea and process behind it

How I Built My Personal Blog

I lead a busy life, but my passion for writing about technology, lifestyle, projects, and experiments drives me to maintain a personal blog. I've owned this domain for nearly a decade, yet finding the time to write consistently has always been a challenge. Many of you might relate to this struggle. The blog wasn't properly automated from a technological standpoint, and my commitments, especially to my kids, often took precedence. With so many interests, focusing on just one felt limiting to my lifestyle.

But let's set those thoughts aside for now and dive into the blog itself!

The Initial Setup

Initially, I built my blog using Laravel 5.6, a framework I was quite familiar with. I took pride in its implementation, but I neglected the deployment process, thinking, "It's just a blog!" In hindsight, that was a mistake. Every update or new feature became a painful task, especially as Laravel evolved. The release of Laravel 6 introduced significant architectural changes, and with Laravel 8 the upgrade only became more complex. I would have had to rewrite the blog from scratch, which, to be honest, was never my main goal. I had built a lot of functionality, and coding with AI wasn't yet a thing back then: the old days of real coding! I had added an admin section with insights, metrics, user roles, and permissions, but as I added features, the blog became more complex and stressful to maintain.

By that point, I had already written several articles. The most-read ones covered near-real-time data streaming from source to Power BI through Azure Data Factory (2020), decoupling infrastructure from LAMP/LEMP to Docker (2021), and the custom identity management I implemented in Laravel and wrote a technical article about in 2017. They had become references that a number of engineers kept coming back to, as the average visitor was mostly interested in building data pipelines in Azure or implementing custom authentication in Laravel 5.6.

Still, I didn't consider that enough to justify the maintenance effort. The decision was an easy one: I cut the cord and shut the blog down.

Embracing Automation and AI

Over the past two years, I've immersed myself in AI, exploring new ways to automate processes in general, not only for maintaining my blog. This includes monitoring complex metrics, analyzing user experience, and evaluating AI agents and servers. I wanted a blog that could sustain itself, allowing me to focus on the content without worrying about the operational overhead.

Thus, I embarked on creating an AI-driven blog. The vision was to enable anyone to contribute articles, not just me. I envisioned an automated review process where AI agents would act as judges, evaluating the quality of articles and ensuring they adhered to blog policies.

Streamlining the Writing Process

One of my primary goals was to reduce the time spent typing. I wanted to be able to speak ideas into my phone, create notes, and have them sent to the blog. These notes would be queued, with a system in place to dequeue them one by one. Each note would be submitted to AI agents for article creation, followed by a review from the LLM council, and finally a human-in-the-loop review to ensure quality and compliance.
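To make the flow concrete, here is a minimal Python sketch of the queue-and-review idea. It is an illustration, not the blog's actual code: `write_article`, the three judge functions, and the simple-majority rule are all hypothetical stand-ins, and a real setup would call LLM agents where this sketch uses plain functions.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass, field
from typing import Callable

# A judge takes the draft text and returns True to approve (hypothetical interface).
Judge = Callable[[str], bool]


@dataclass
class Draft:
    note: str
    body: str
    votes: list[bool] = field(default_factory=list)

    @property
    def council_approved(self) -> bool:
        # Assumption: a simple majority of judges must approve.
        return sum(self.votes) > len(self.votes) / 2


def write_article(note: str) -> str:
    """Stand-in for the article-writing agent; a real one would call an LLM."""
    return f"Draft article based on the note: {note}"


def process_notes(notes: deque[str], judges: list[Judge]) -> list[Draft]:
    """Dequeue notes one by one and return the drafts that await human review."""
    awaiting_human_review: list[Draft] = []
    while notes:
        note = notes.popleft()  # first in, first out
        draft = Draft(note=note, body=write_article(note))
        draft.votes = [judge(draft.body) for judge in judges]
        if draft.council_approved:
            awaiting_human_review.append(draft)
    return awaiting_human_review


# Hypothetical judges standing in for the LLM council.
def long_enough(body: str) -> bool:
    return len(body.split()) >= 8


def no_todo_markers(body: str) -> bool:
    return "TODO" not in body


def mentions_note(body: str) -> bool:
    return "note" in body.lower()


if __name__ == "__main__":
    queue = deque(["Kubernetes for a personal blog", "TODO"])
    for draft in process_notes(queue, [long_enough, no_todo_markers, mentions_note]):
        print("Ready for human review:", draft.note)
```

Nothing is published automatically in this sketch: drafts that pass the council only land in a list for the human reviewer, which mirrors the human-in-the-loop step described above.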

I'll write more about this approach in upcoming articles.

While I still need to review articles before they are published, primarily to mitigate compliance and legal issues, the time I spend on this is significantly less than in the past.

Simplifying User Management

To alleviate the headaches of user management, I integrated Auth0 as my identity management system. This setup allows me to manage roles and permissions with minimal effort, streamlining the entire process.

Auth0 has a free tier, which is fantastic for small projects like mine. I'll cover this in an upcoming article as well.

Robust Infrastructure

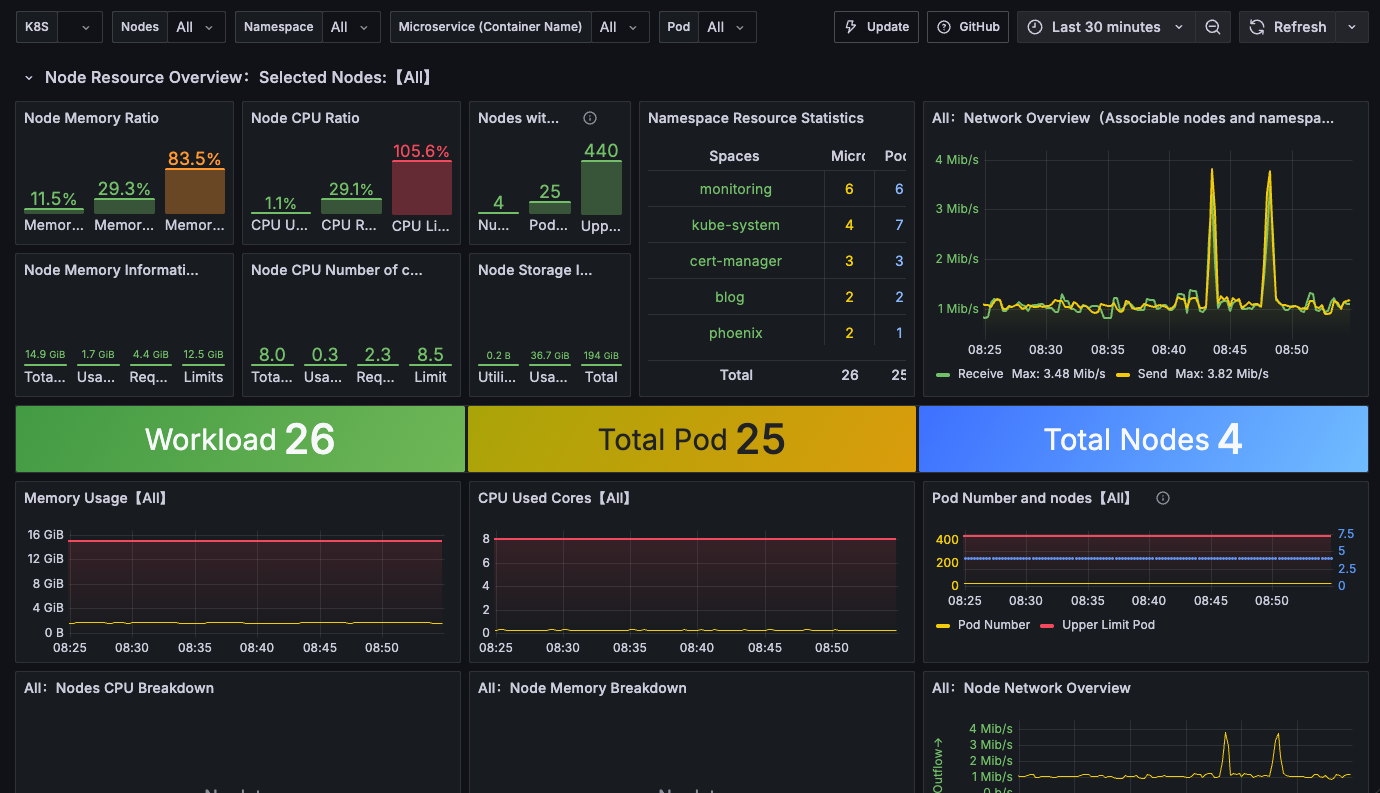

From an infrastructure perspective, I implemented a comprehensive Kubernetes cluster capable of supporting various components, including PostgreSQL, MinIO, Kafka, Arize Phoenix, Grafana, and certificate management.

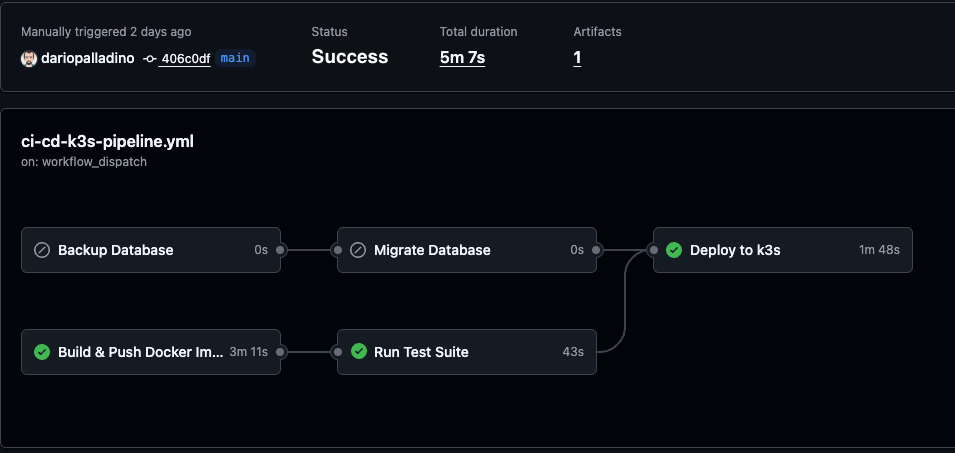

The entire deployment pipeline is automated through GitHub Actions, ensuring that every merge to the main branch triggers a deployment.

Additionally, I established a Claude Code environment with agents, skills, specifications, and a prioritized list of requirements for automated implementations. While I remain the human-in-the-loop, the time I spend reviewing everything to keep the solution current is vastly reduced compared to previous years.

This probably deserves an article of its own. A lot has already been written on the topic, but I believe every perspective counts.

Monitoring and Performance

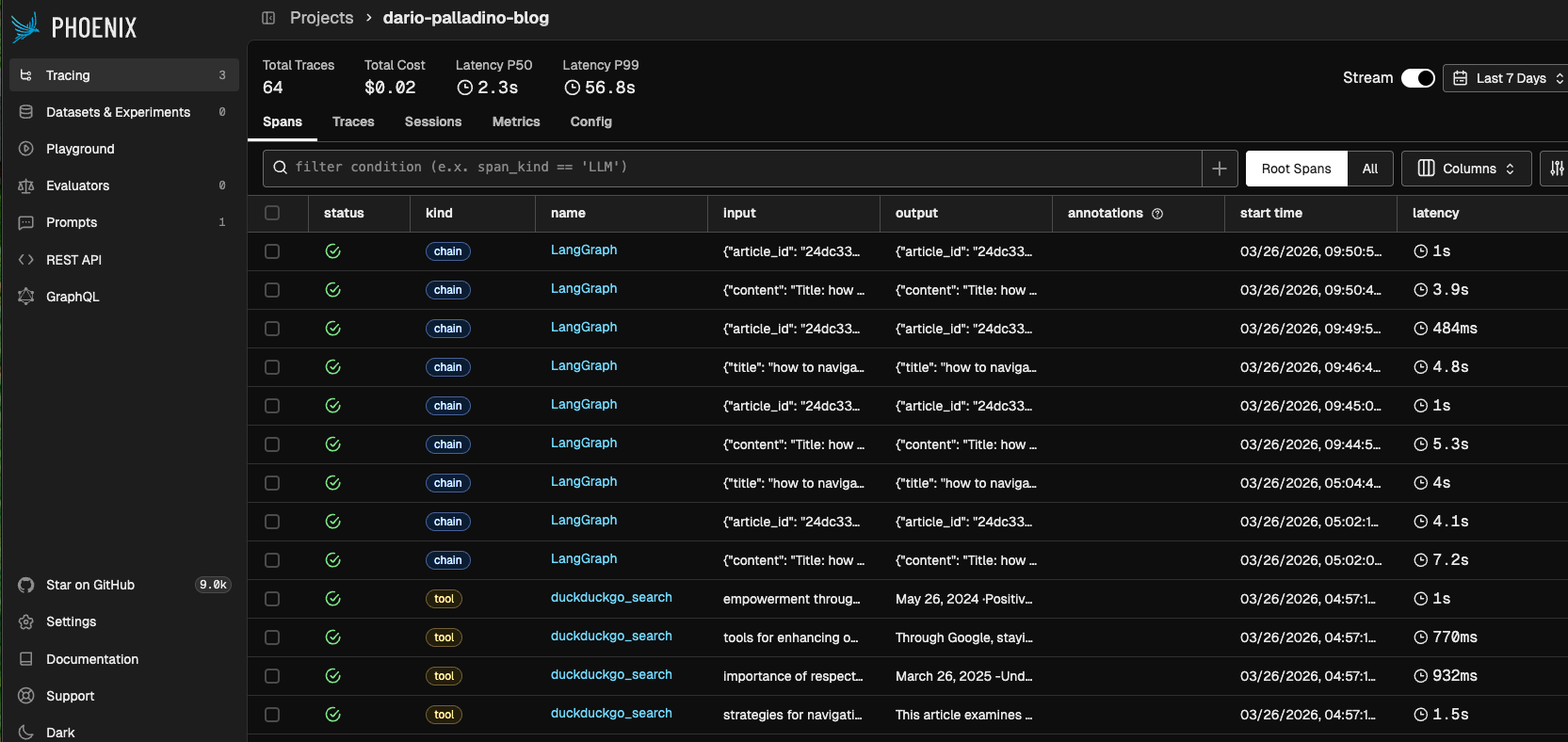

I enjoy reviewing how the agents perform on the blog using Arize Phoenix, the observability framework I decided to use, which allows me to monitor token consumption, build datasets easily to evaluate prompt performance, and more.

Grafana serves as my go-to tool for infrastructure monitoring.

I'll go into more detail about what happens behind the scenes in upcoming articles.

By embracing automation and AI, I've transformed my blog into a more efficient platform, allowing me to focus on what truly matters: sharing ideas and insights with you.

Want to Join?

If you're interested in writing for this blog, feel free to drop me an email with a brief background about yourself and your topics of interest.

Written by

Dario

Dario is a senior Data & AI / Cloud Architect, certified in PMP, TOGAF, and SAFe, with over 20 years of IT experience, specializing in AI platforms and data-driven architectures in the Azure Cloud.