Some years ago, I would have run my blog on a simple LAMP/LEMP installation on a single well-equipped virtual server with a couple of GB of memory and a couple of CPU cores. Wow, how many years have gone by since then! I wasted so much time recovering my VMs after kernel updates for security patches that impacted my PHP or Apache installations. Every time, it was a pain!

Nowadays, my blog, dariopalladino.com, runs in a fully decoupled architecture based on single docker containers proudly hosted on DigitalOcean.com, and those wasted days are gone, replaced by automated CI/CD pipelines.

Although DigitalOcean also offers a variety of PaaS services that would allow my stack to run easily on a managed Kubernetes cluster, what I'm going to describe here is fully based on an IaaS service delivery model, as I still prefer to be as platform independent as possible.

With this article, I'll try to explain a basic architecture that will make your life easier in terms of maintenance and CI/CD for your existing blog. I will guide you through each step of decoupling your LAMP/LEMP architecture with docker containers, without adding the complexity of a database cluster or of nodes and pods, which would make this article too long and complex to follow.

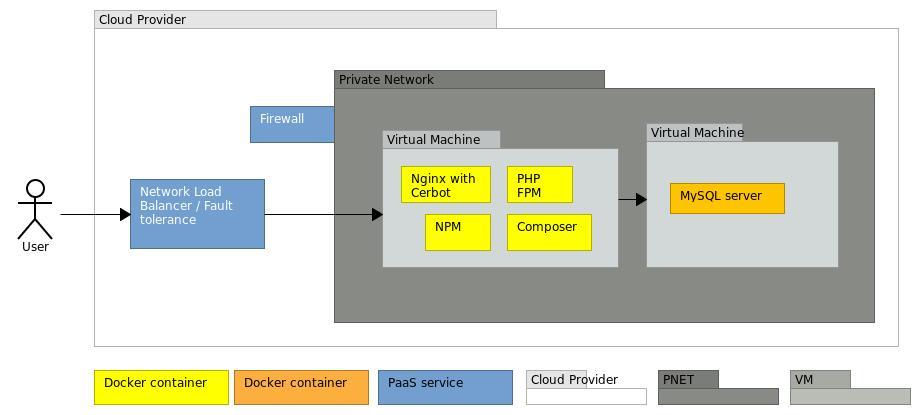

The architecture I'll be explaining here is a basic one based on a single Linux Virtual Machine with separated containers for:

- the Nginx web server with Certbot to automate SSL certificate management

- a single container for the PHP FastCGI Process Manager (also endowed with Composer)

- a single container for your MySQL database with the ability to deploy/restore your database by means of the entrypoint initial script

- a single container for an evergreen Redis cache for session management (optional)

- and one more optional container for the NPM JavaScript dependency manager.

This basic architecture, though, is not what I'm running in production; it's closer to how I run my blog in my development environment. However, with a few tweaks, it can be turned quite easily into a better-structured decoupled infrastructure with a dedicated Virtual Machine for your MySQL container (or perhaps a cluster, where you can get the most out of MySQL Cluster), a dedicated Virtual Machine for your Nginx proxy web server (if you want to host more than one website), a shared NFS volume for your code repository and a dedicated container for PHP-FPM on a separate Virtual Machine. Nevertheless, this more robust architecture would still have drawbacks, because the Nginx and PHP-FPM containers remain single points of failure. To make it a bit more reliable and highly available, a basic Docker Swarm could do the trick. However, we also have to consider costs, and the approach just described may cost more than you're willing to spend on a non-monetized blog.

In fact, running the basic architecture is much more affordable in terms of costs and maintenance if you have a low budget or don't really want a full-fledged infrastructure. There are still ways to have a proper disaster recovery procedure for your basic architecture, should you experience issues with your droplet or containers. It all depends on your SLO (Service Level Objective) and RTO (Recovery Time Objective), and on how you plan to maintain your infrastructure. Not everything must run in a K8s cluster, although it helps a lot! :)

With proper monitoring systems and notification alerts, you can be up and running again in a matter of minutes even with just a single VM. It really depends on your customers' expectations about your blog! However, for this basic architecture, I assume your customers are happy with an SLO/RTO of 24 hours, because you cannot always be available for maintenance! Once you receive the issue notification, you have 23 hours to react and 1 hour to get your blog up and running again with a few automated Infrastructure-as-Code deployments. This would have been harder with your old architecture.

However, the basic architecture I'm explaining here still comes with a shared volume and a network load balancer, so should you decide to go for the more robust implementation, it will be easy to do so.

That being said, let's dive in. This is what we will achieve by the end of this walkthrough. In this drawing I'm still keeping the MySQL server in a separate Virtual Machine, as I really care about the separation of concerns, and to me the database is the most sensitive component. This is what I would recommend for a production environment, at least. For this walkthrough, though, to make things a bit easier, I'll keep everything in one easy deployment on a single Virtual Machine.

As mentioned, I'll be using DigitalOcean during this walkthrough, and I can offer you $100 for 60 days so that you can experiment with it and, if you like it, move forward and test the Kubernetes PaaS service for a fully reliable, available and scalable architecture.

If you don't want to sign up with DigitalOcean, don't be deterred! Almost every step explained here can be reproduced, with very small differences, in any cloud that provides a network load balancer and an IaaS service delivery model. I have also tested this deployment on AWS, and it is a very cheap solution there too if you prefer that provider. As I said earlier, since this is an IaaS service delivery model, I can deploy wherever I want without the risk of being locked into one cloud provider or another.

Then, before creating anything, stop for a second and think about a naming convention for the resources you're going to deploy. I suggest you pause and think it through thoroughly, as this will be the backbone of your infrastructure: the easier your naming convention is to recall, the better and quicker the maintenance of your services will be. I hope I've made my point clear.

Now, let's create a dedicated virtual private network, or VPC (Virtual Private Cloud), which adds a first security layer to our environment. From Networking, create a VPC in the Region where you want your environment to live. Here you can find a very extensive guide.

Second, from the nice green button on the upper right, let's create a new volume. 10 GB is more than enough for the images and our code repository, basically our /var/www shared folder (and not only that)!

Great! Now, let's create our new Linux Virtual Machine, or Droplet, that must be bound to the VPC we created before. As we will need to install Docker on it, you can either decide to use an existing image from the Marketplace (Docker on Ubuntu 20.04) or just use the standard image available for Ubuntu 20.04 LTS and install Docker on your own.

Pick a basic plan; for the sake of testing this tutorial, I recommend the 5 USD/month plan. Choose the same Region where you created the VPC. Then give it a proper hostname based on the naming convention you thought about before, add the volume you created and run the deployment. The new VM will be created with two interfaces, one for the public IP and one for the private one.

From the green button, let's create a Cloud Firewall to strengthen our environment with a new layer of security. Give it a name that makes sense. Keep the SSH port open to every IPv4 source (and IPv6 too, if you're already using it), and consider changing the SSH config on your droplet to allow only SSH key based connections. Add ports 80 and 443 from every source. That's it. Now attach the Firewall to your Droplet and deploy it.

Last but not least, still from the green button on the upper right, let's create the Load-Balancer. Pick the small one, 10USD, and select the same Region as the other services so far. Add the Droplet you created before and allow port forwarding for HTTP 80 and HTTPS 443. We'll come back later to finalize this configuration.

The total cost of ownership, so far, is 16 USD per month, which is quite affordable! If we decoupled the database from the rest of the current architecture, it would add a small cost of 10 USD (for a bigger droplet), bringing us to 26 USD per month. Not bad at all!

So, now, let's log in to the new VM we created. I won't explain how to set up the whole virtual machine with SSH keys, harden the whole environment and so on and so forth, as this would be off-topic.

That being said, let's start the docker engine installation if you chose to create your droplet from the standard Ubuntu image. On the docker website, there is a very easy to follow step-by-step guide. Also, don't forget to install docker-compose!

Now that we have installed the docker engine, let's create a docker library folder under the newly mounted shared volume and change the docker daemon configuration file so that docker uses this new path as its main folder. That's cleaner, and it allows you to back up this volume and mount it on a different droplet should a disaster happen!

$> sudo mkdir -p /<your volume>/docker/lib

$> sudo vi /etc/docker/daemon.json

{

"data-root": "/<your volume>/docker/lib/"

}

Save and restart docker.
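If you want to double-check that docker really picked up the new folder after the restart, a small helper like the following can do it. This is only a sketch: the function name check_data_root is my own, and it relies on `docker info` exposing the data directory as the DockerRootDir field.

```shell
#!/bin/sh
# Sketch: verify that the docker daemon uses the expected data-root.
# check_data_root is an illustrative name, not part of any repo.

check_data_root() {
    # $1 = the path you wrote into /etc/docker/daemon.json
    # `docker info` reports the active data directory as DockerRootDir.
    actual=$(docker info --format '{{ .DockerRootDir }}')
    # Ignore a trailing slash on either side before comparing.
    [ "${actual%/}" = "${1%/}" ]
}
```

For example, after `sudo systemctl restart docker` you could run `check_data_root "/<your volume>/docker/lib" && echo "data-root OK"`. If your user is not in the docker group, run it from a root shell.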

Now, let's create a docker network named commsnet to ensure proper communication between containers, and a docker volume named dbdata to persist the database data. You can pick any names, as long as you adapt the docker-compose files in this GitHub repo, which we clone next to build up your decoupled architecture.
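The network and the volume can be created with the plain docker CLI. Here is a minimal sketch that creates them only when they are missing, so it can be re-run safely; the helper names ensure_network and ensure_volume are mine.

```shell
#!/bin/sh
# Sketch: create the docker network and volume only if they do not exist yet.
# ensure_network / ensure_volume are illustrative helper names.

ensure_network() {
    # `inspect` fails when the network is unknown, which triggers `create`.
    docker network inspect "$1" >/dev/null 2>&1 || docker network create "$1"
}

ensure_volume() {
    docker volume inspect "$1" >/dev/null 2>&1 || docker volume create "$1"
}
```

With the names used in this walkthrough you would call `ensure_network commsnet` and `ensure_volume dbdata`.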

$> sudo mkdir -p /<your volume>/docker/platform

$> sudo chown -R <youruser>:<youruser> /<your volume>/docker/platform

$> cd /<your volume>/docker/platform

$> git clone https://github.com/dariopalladino/laravel-dockers.git <name_of_your_project>

Now, since this repo is meant to work with blogs based on the Laravel framework, all the settings are ready as they are. However, with very few changes to the nginx config file you can find in the .docker/nginx folder, you can make it work with whatever PHP framework you choose, as well as WordPress!

Assuming you maintain your code in a Git repository, I have added a start.sh script that allows you to sync your repo with the src/ folder, which is bound to the /var/www folder of the nginx and php-fpm containers. The start.sh script can be added to the entrypoint directive of the PHP-FPM service in the docker-compose file. Just make sure to update all the environment variables you find in the docker-compose.env file in the root folder. You should have something like this:

GIT_EMAIL={git_your_email}

GIT_NAME={git_username}

CLONE_REPO=

GIT_REPO=github.com/{your_repo_here}/{repo_name}.git

GIT_BRANCH=master

GIT_USERNAME={username_here}

GIT_PERSONAL_TOKEN={git_access_token_here}

GIT_USE_SSH=

SKIP_CHOWN=

SKIP_COMPOSER_UPDATE=1

REMOVE_FILES=0

SSH_KEY=

MYSQL_PORT_BINDING=3306

NGINX_PORT_BINDING=80

NGINX_SSL_PORT_BINDING=443

MYSQL_DATABASE={mysqldbname}

MYSQL_ROOT_PASSWORD={password}
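Since it's easy to forget one of the {placeholder} values, a quick check before starting the stack may save you some debugging. The following is just a sketch, and find_placeholders is a name I made up for it: it lists every KEY=value line that still contains something in curly braces.

```shell
#!/bin/sh
# Sketch: list env lines whose value still contains a {placeholder}.
# find_placeholders is an illustrative helper name.

find_placeholders() {
    # $1 = path to the env file; prints "line-number:line" for each hit.
    # Exit status is 0 when placeholders remain, 1 when the file is clean.
    grep -nE '^[A-Za-z_][A-Za-z0-9_]*=.*\{[^}]*\}' "$1"
}
```

You could use it as `if find_placeholders docker-compose.env; then echo "please fill in the values above" >&2; fi`. Empty values such as CLONE_REPO= are not reported.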

Once you have updated these values, you can run the script to sync your repo and have all your code in the src/ folder. If you don't want to sync your repo through the start.sh script, all you have to do is make sure your project code is placed in a subfolder of the src/ folder, to have something like:

$> ls -lrta src/

src/<name of your blog>

src/<second blog>

src/<third blog>

You can do this by linking your code folder into the src/ folder.

Very well! Two more steps to go: let's dump your MySQL database and place the dump into the .docker/mysql/dump folder of the repo you cloned at the beginning:

$> mkdir -p .docker/mysql/dump && cp <your dump> .docker/mysql/dump

This folder feeds the entrypoint of the MySQL service: once the container is started, the entrypoint script will look for any database dump there. This will automatically create your database, persisted in the docker volume created before. What is still missing is a good backup process that you can schedule on the host machine to dump your database at a frequency based on how often your data changes and on the RPO (Recovery Point Objective) you define in your business continuity plan, basically the data you would lose between the last backup and the moment your database becomes unavailable due to a failure.
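As a starting point for such a backup job, here is a minimal sketch. It makes a few assumptions: the container exposes MYSQL_ROOT_PASSWORD and MYSQL_DATABASE in its own environment (as the official mysql image expects), and the function name, file naming and retention period are my own choices.

```shell
#!/bin/sh
# Sketch: dump the database from the MySQL container and keep a few days of history.
# backup_mysql, the dump-*.sql.gz naming and the 7-day default are illustrative.

backup_mysql() {
    # $1 = container name, $2 = backup directory, $3 = days to keep (default 7)
    container=$1
    dir=$2
    keep_days=${3:-7}
    target="$dir/dump-$(date +%Y%m%d-%H%M%S).sql.gz"
    tmp="$dir/.dump.$$.sql"

    mkdir -p "$dir" || return 1
    # Assumption: the credentials live in the container environment, so the
    # password never shows up on the host command line.
    if docker exec "$container" sh -c \
        'exec mysqldump --single-transaction -uroot -p"$MYSQL_ROOT_PASSWORD" "$MYSQL_DATABASE"' \
        > "$tmp"; then
        gzip -c "$tmp" > "$target" && rm -f "$tmp"
    else
        # Never keep a partial dump around.
        rm -f "$tmp"
        return 1
    fi
    # Remove dumps older than the retention window.
    find "$dir" -name 'dump-*.sql.gz' -mtime +"$keep_days" -exec rm -f {} +
}
```

Wrapped in a script, you could call `backup_mysql laravel-mysql57 "/<your volume>/backups"` from a daily cron job on the host and tune the schedule to your RPO.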

The last relatively complex step is to review the nginx configuration that you can find in the .docker/nginx/ssl/ folder. This file is almost ready to use, but a couple of amendments are still needed. You can either rename this file to match your domain or keep it as it is. I would recommend naming this file to match your code repo.

Thus, edit the file and check the web root folder. I have set it to /var/www/public by default, assuming your code will be under /var/www and you are using the Laravel framework, which starts up from the public folder. In any other case, this path should be changed accordingly.

The SSL certificate default path is /etc/letsencrypt/live/localhost/ where localhost should be changed to match your domain name.

The fastcgi_pass directive points to the container named laravel-php on port 9000. Unless you have changed the container names in the docker-compose file, you have nothing else to do. Otherwise, update it accordingly.

There are other configuration files that you may want to change, but for now I suggest you follow along and, once everything is up and running, come back and fine-tune.

From here on, it's all downhill! Almost! ;)

Let's run the docker-compose file, assuming you're using Laravel 7 or 8 or any PHP framework that needs a PHP version equal to or greater than 7.3.

$> docker-compose -f docker-compose.dev.v2.yml up -d

This command will pull the docker images from Docker Hub and run the services defined in the docker-compose file, respecting the dependencies between them. To stop the full stack, just run:

$> docker-compose -f docker-compose.dev.v2.yml down

If everything has worked fine, you should have something like the following:

$> docker container ls

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

bac6b4fb0f9a dariopad/nginx-laravel:latest "/docker-entrypoint.…" 2 days ago Up 1 minute 0.0.0.0:80->80/tcp, 0.0.0.0:443->443/tcp laravel-nginx

ef97ed4bc6bc redis:alpine "docker-entrypoint.s…" 2 days ago Up 1 minute 6379/tcp laravel-redis

a5604f914ae0 mysql:5.7.32 "docker-entrypoint.s…" 2 days ago Up 1 minute 3306/tcp, 3306/tcp laravel-mysql57

fab258cc8d1b dariopad/php-fpm-laravel:7.3 "docker-php-entrypoi…" 2 days ago Up 1 minute 9000/tcp laravel-php

The NPM container will execute and exit. That's why you can't see it in this list unless you run docker container ls -a.

For any error, just check the logs and adjust your configurations:

$> docker logs laravel-php

$> docker logs laravel-nginx

$> docker logs laravel-mysql57
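If you'd rather not check each container by hand, the same idea can be wrapped in a small loop. This is only a sketch and check_containers is my own helper name: it asks docker for the running state of each container and prints the last log lines of those that are not running.

```shell
#!/bin/sh
# Sketch: report containers that are not running and show their recent logs.
# check_containers is an illustrative helper name.

check_containers() {
    failed=0
    for name in "$@"; do
        # .State.Running is "true" for a running container.
        state=$(docker inspect -f '{{ .State.Running }}' "$name" 2>/dev/null)
        if [ "$state" != "true" ]; then
            echo "$name is not running" >&2
            docker logs --tail 20 "$name" >&2
            failed=1
        fi
    done
    return "$failed"
}
```

With the container names from this repo, that would be `check_containers laravel-nginx laravel-php laravel-mysql57 laravel-redis`.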

If everything is up and running, test it by browsing to your server at http://<your server public ip>/ , see if it responds and check the logs. As we enabled a permanent redirect from http to https, you may have to accept the security prompt from your browser.
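The same test can be done from the command line with curl. A minimal sketch, assuming the redirect is answered with a 301 (or 308) status code; check_redirect is a name I made up for it.

```shell
#!/bin/sh
# Sketch: check that plain HTTP answers with a permanent redirect.
# check_redirect is an illustrative helper name.

check_redirect() {
    # $1 = host name or IP of the server
    # -s silences progress output, -o discards the body, -w prints the status code.
    code=$(curl -s -o /dev/null -w '%{http_code}' "http://$1/")
    echo "$code"
    [ "$code" = "301" ] || [ "$code" = "308" ]
}
```

Run it as `check_redirect <your server public ip>`. To look at the HTTPS side before the certificate is in place, `curl -k -I https://<your server public ip>/` skips certificate verification.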

Once you have fixed all possible errors and your website is finally up and running, let's make the final changes that allow Nginx to work with the network load balancer, whose configuration we will finalize in a moment.

Edit the Nginx configuration file and add the proxy_protocol directive as follows:

listen 80 proxy_protocol;

listen 443 ssl proxy_protocol;

real_ip_header proxy_protocol;

set_real_ip_from <your load balancer or VPC address range>;

These changes will allow your application to receive the real IP address from the client. So, let's go back to the Load Balancer and let's change some parameters.

You should have set up the Load Balancer to accept all incoming connections on ports 80 and 443. That's fine. Let's keep it like this. This means that anyone can connect to your droplet using the LB public IP address instead of the VM's. Enable the proxy protocol and point the health check at port 443 of your droplet. That's it for your Load Balancer settings! Remember that port 80 is forwarded to Nginx by the LB and redirected permanently to port 443 by Nginx itself.

Now, once you have tested that your web server can be reached correctly from your droplet's public IP, let's move back to the Cloud Firewall and change some basic rules. Remember that this Firewall is attached to your Droplet only. Thus, let's allow traffic to your Droplet's web ports only from the LB. Then, let's keep only port 22 available for remote administration of your droplet and deny everything else by default. (I recommend allowing only SSH key based authentication on your droplet.) I usually tend to have a management plane that I use as a jump box to my private network and disable all public IPs on the droplets hosting services, but that is a topic for another time.

Thus, you should now have a Cloud Firewall fully set up, with only port 22 open on the droplet's public interface, and the Load Balancer up and running.

There is just one more step left: setting up the domain. Wherever your DNS is hosted, you have to replace the IP used for your domain with the one of the Load Balancer. If you prefer, as I recommend, you can use the domain name service from DigitalOcean.

After everything is up and running, you may still experience trouble with the SSL certificate. If so, just jump into the Nginx container and run the following commands. Please note that the letsencrypt folder should be bound to the shared volume, so that you won't lose your certificates should the docker containers go down or the host fail. The renewal process is already scheduled in the nginx container and will take care of keeping the certificate up to date.

$> docker exec -it laravel-nginx /bin/sh

$> sudo certbot --nginx

That's it. I hope you've been able to follow along with this long article and that you now understand a little of the principles behind the scenes. I also hope this long walkthrough enables your blog to go beyond the old-school LAMP/LEMP architecture and helps you achieve even more reliability and scalability in a real production environment.

To enable CI/CD pipelines and automate each process, I would use Azure DevOps, a fully comprehensive toolset and my Swiss Army knife. I love it! There are many tools for doing the same; the point is that in Azure DevOps one can automate all the common CI/CD steps in one place. Ansible is another great tool for IT automation! It just comes down to your own taste!

I hope you enjoyed this walkthrough and that it helps you move forward and get rid of those old installations that are just too difficult to maintain.

Keep up all the good work and all the best!